Selected Response

As the name implies, selected-response items are those in which students read a question and are presented with a set of responses from which they choose the best answer. In other NAEP assessments, selected-response items most often take the form of multiple-choice items, in which students select an answer from, say, four options provided. The choices include the most applicable response—the "answer"—as well as three "distractors." The distractors should appear plausible to students but should not be justifiable as a correct response, and, when feasible, the distractors should also be designed to reflect current understanding about students' mental models in the content area. The NAEP Technology and Engineering Literacy Assessment will include such multiple-choice items within both the scenario-based and the discrete-item assessment sets as one type of selected response.

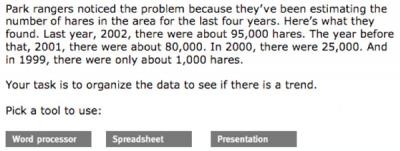

In addition to the conventional multiple-choice selected-response items, the scenario-based sets in the NAEP Technology and Engineering Literacy Assessment will include other types of selected-response formats. The computer-based nature of the scenarios will allow a variety of types of student selections to be measured. For example, a student might be given a task to perform and asked to select an individual tool from a set of virtual tools. When a student selects a tool by clicking on it, the click provides a measurable response that is, in essence, a selected response.

A selected-response item in such a scenario might have fewer choices than in a conventional multiple-choice item. For example, a student might select between two alternatives, such as deciding whether a switch in a circuit should be open or closed in order to produce a particular outcome, but the student might also have to justify or explain the selection. In this case, the first part of the answer is a selected response, but it might be necessary to score the two parts of the item together so that the selection and justification together determine the score. In complex, real-world scenarios, there might not be a single "correct" selection, and in such a situation what matters is that the selection is justified adequately.
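The joint scoring of a selection and its justification described above can be illustrated with a minimal sketch. The 0 to 2 score scale, the function name `score_two_part`, and the idea that a rater supplies a yes/no adequacy judgment are all assumptions made for illustration; the framework does not prescribe a particular scale or procedure.

```python
from typing import Optional


def score_two_part(
    selection: str,
    justification_adequate: bool,
    keyed_selection: Optional[str] = None,
) -> int:
    """Score a selection and its justification together (hypothetical 0-2 scale).

    keyed_selection is the option treated as correct, or None when the
    scenario has no single "correct" selection.
    """
    if keyed_selection is None:
        # No keyed answer: only the adequacy of the justification matters.
        return 2 if justification_adequate else 0

    selection_correct = selection == keyed_selection
    if selection_correct and justification_adequate:
        return 2  # correct choice, adequately justified
    if selection_correct:
        return 1  # correct choice, weak or missing justification
    return 0      # incorrect choice under this illustrative rubric
```

For the circuit example, `score_two_part("closed", True, keyed_selection="closed")` would give full credit under this sketch, while omitting `keyed_selection` models the case in which any adequately justified selection is acceptable.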

An example of a selected-response item that might be part of a series of items embedded in a scenario-based assessment set is shown below. The question was part of an ICT assessment about reintroducing lynx into a Canadian park overrun by hares. The assessment was designed for 13-year-olds.

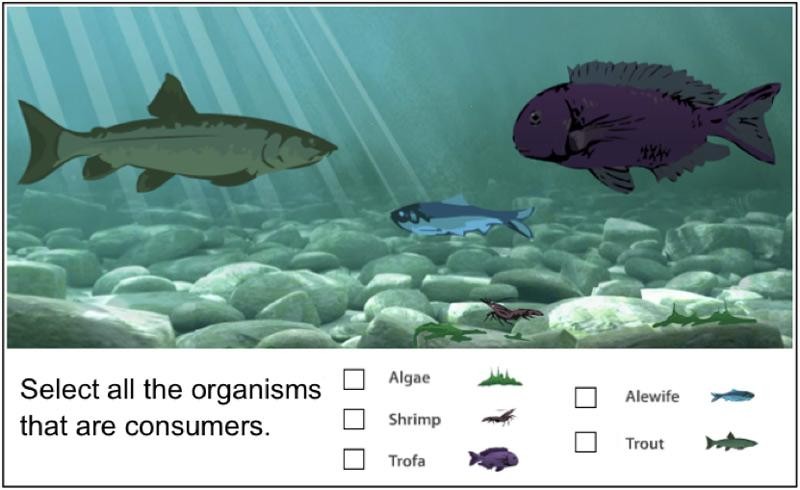

Other selected-response types within a scenario-based assessment set might include a task in which a student selects all of the options that apply from a given set of choices. Again, in a real-world situation there might be one "best" combination of choices but also one or more other combinations that are partially correct. In such a situation, it makes sense to use a scoring rubric that rewards different combinations of selected responses with different scores.
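A rubric of this kind can be sketched as a lookup from combinations of selected options to scores. The option labels "A" through "D", the score values, and the names `EXAMPLE_RUBRIC` and `score_combination` are hypothetical and serve only to illustrate the idea of crediting a "best" combination fully and other combinations partially.

```python
from typing import Dict, FrozenSet, Iterable

# Hypothetical rubric: one "best" combination earns full credit and
# two other combinations earn partial credit.
EXAMPLE_RUBRIC: Dict[FrozenSet[str], int] = {
    frozenset({"A", "B", "C"}): 2,  # best combination
    frozenset({"A", "B"}): 1,       # partially correct
    frozenset({"B", "C"}): 1,       # partially correct
}


def score_combination(
    selected: Iterable[str],
    rubric: Dict[FrozenSet[str], int],
) -> int:
    """Return the rubric score for the selected options.

    The order of selection is ignored, and any combination that is not
    listed in the rubric receives a score of 0.
    """
    return rubric.get(frozenset(selected), 0)
```

Listing the creditable combinations explicitly, rather than awarding a point per correct option, keeps the rubric aligned with the judgment that only certain combinations are defensible.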

In the following item, students observe organisms interacting in an ecosystem before choosing all of the organisms that are consumers. The assessment was designed for grade 8. In the NAEP Technology and Engineering Literacy Assessment, students might choose all of the organisms in an ecosystem most immediately affected by a pollutant.

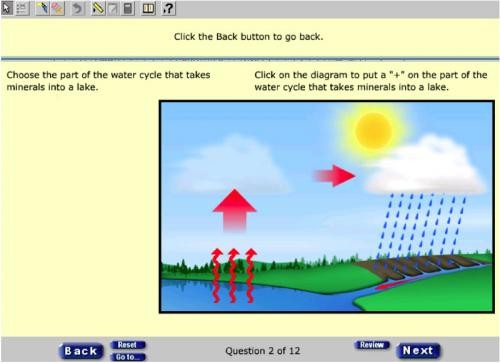

Another form of selected response is a "hot spot" in which a student answers by clicking on a spot on an image such as a map, picture, or diagram. The example below was designed for grade 8 students as part of a set of items on a state science test. For the NAEP Technology and Engineering Literacy Assessment, a task might be designed that asks students to click on the places in the image where pollution originated in the water system.
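One way a hot-spot response could be captured is by testing whether the clicked point falls inside a predefined region of the image. The sketch below assumes rectangular regions in pixel coordinates with the origin at the top-left corner; the `HotSpot` class, the `hit_test` function, and the region labels are hypothetical and are not drawn from any NAEP delivery system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class HotSpot:
    """A rectangular clickable region of an image (hypothetical layout)."""
    label: str
    left: float
    top: float
    width: float
    height: float

    def contains(self, x: float, y: float) -> bool:
        # Edges of the rectangle count as inside the region.
        return (self.left <= x <= self.left + self.width
                and self.top <= y <= self.top + self.height)


def hit_test(x: float, y: float, hot_spots: List[HotSpot]) -> Optional[str]:
    """Return the label of the first region containing the click, else None."""
    for spot in hot_spots:
        if spot.contains(x, y):
            return spot.label
    return None
```

The returned label can then be treated like any other selected response and scored against a key or rubric.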