Descriptions of the Response Types Used in the Assessment Sets

In conventional items on previous NAEP assessments, students have responded either by selecting the correct response from a number of choices or by writing a short or long text-based response to the question posed. The computer-based NAEP Technology and Engineering Literacy Assessment, with its scenario-based assessment sets, offers opportunities to greatly extend the ways in which a student can respond to an assessment task. This assessment can therefore move beyond thinking of response types simply as multiple choice or written responses and begin to consider new types of responses.

In this assessment, three response types are used: short constructed response, extended constructed response, and selected response. Although similar names are used in other NAEP assessment frameworks, in the context of the NAEP Technology and Engineering Literacy Assessment they have different and expanded meanings. These meanings are described in the following sections.

Constructed Response

Constructed responses are ones in which the student "constructs" the response rather than choosing a response from a limited choice of alternatives, as is the case with selected response items. Constructed responses in the NAEP Technology and Engineering Literacy Assessment will include short constructed response tasks and items as well as extended constructed response tasks and items. These are described in detail in the following sections.

Short Constructed Response

Short constructed responses might be used in either the discrete-item assessment sets or the scenario-based assessment sets. They generally require students to do such things as supply the correct word, phrase, or quantitative relationship in response to the question given in the item, identify components or draw an arrow showing causal relationships, illustrate with a brief example, or write a concise explanation for a given situation or result. Thus students must generate the relevant information rather than simply recognize the correct answer from a set of given choices, as is the case in selected response items. When a short constructed response item is used as part of a discrete-item set, all of the background information needed to respond is contained within the stimulus material.

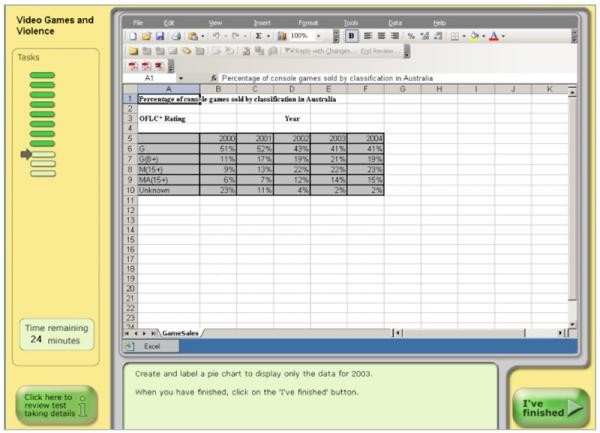

The following is an example of a short constructed response item that might be used in a discrete item set. In this computer-based item, students use a spreadsheet program to create a pie chart.

Extended Constructed Response

Extended constructed responses will be used in the long scenario-based assessment sets. In a scenario-based assessment set, the real-world scenario is developed and elaborated on as the student moves through the assessment set. As previously described, the introduction of the scenario will provide context and motivation for the tasks in the assessment set. As the scenario builds, the student undertakes a series of tasks that combine to create the response. For example, a student might be asked to enter a search term to gather information about a famous composer and to request information from virtual team members. Students could vary the size of populations to test a model of a city's transportation system, or, in a different scenario, they might be asked to construct a wind turbine from a set of virtual components in which there are several combinations of turbine blades and generators.

Additional measures of the students' responses can be made by capturing data about which combinations of components the students selected, whether they covered all possible combinations, and what data they chose to record from their tests of the components. A follow-on task might ask the students to select different types of graphic representations for the tabulated data they captured. Observing whether they have selected an appropriate type of graph provides additional information about how they use data analysis tools.

Finally, the students could be asked to interpret their data, make a recommendation for the best combination of turbine blade and generator, and justify their choice in a short written (typed) response. In this way, both the task and the response are extended.

Thus, unlike a short constructed response item, in which all the information needed to complete the task is contained in a single stimulus, an extended constructed response item draws on information spread across several parts of the overall task. In this example, a student could not recommend the best combination of blades and generators without having completed all of the preceding tasks.
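The process measures described for the wind turbine example (which combinations a student selected, and whether all possible combinations were covered) can be sketched in code. The following is a minimal illustration only, not part of the assessment design: the component names, the `coverage_report` function, and the log format are all hypothetical, chosen to show how a coverage check of this kind might work.

```python
from itertools import product

# Hypothetical component names; the framework does not specify any.
BLADES = ["short", "medium", "long"]
GENERATORS = ["small", "large"]


def coverage_report(tested_pairs):
    """Summarize which blade/generator combinations a student tested."""
    all_pairs = set(product(BLADES, GENERATORS))
    # Drop repeats and ignore any pair that is not in the virtual kit.
    tested = set(tested_pairs) & all_pairs
    missing = sorted(all_pairs - tested)
    return {
        "tested": len(tested),
        "possible": len(all_pairs),
        "covered_all": not missing,
        "missing": missing,
    }


# Hypothetical log of the combinations one student chose to test.
student_log = [
    ("short", "small"),
    ("long", "large"),
    ("short", "small"),  # a repeated test counts once
    ("medium", "large"),
]
report = coverage_report(student_log)
print(report)
```

A report of this kind would supplement, not replace, the scoring of the student's written recommendation.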

Designing extended constructed response tasks presents certain challenges. Enough information must be provided in the scenario to allow the student to perform well-defined, meaningful tasks that yield measurable evidence about whether the student possesses the knowledge and skills defined in the assessment targets. Another challenge is to ensure that the dependencies among the tasks that a student performs within an extended response are minimized. For example, in the wind turbine scenario described above, students could run tests of combinations of certain turbine blades and generators, and their responses could be assessed. Then, the students could be given data from another set of tests of different blades and generators that someone else did and asked to interpret those data. In this way the dependency between a student's own data gathering and the data analysis stage is minimized. The goal is to make sure that mistakes or deficiencies in the first part of the task are not carried forward into the second task, thereby giving all students the same opportunity to show their data analysis skills, regardless of how well they did on the first task.

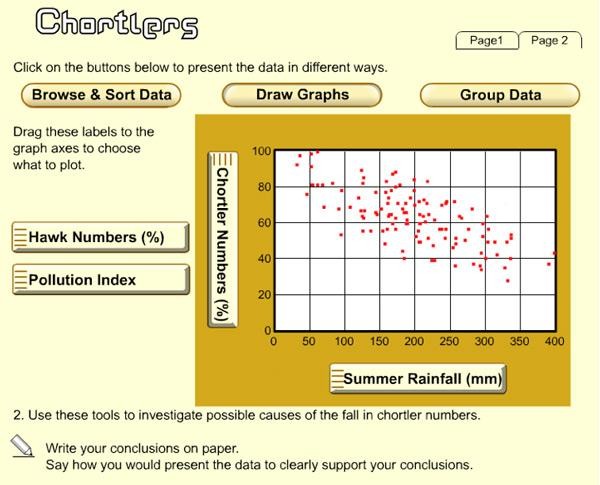

In the following simulation, students are given the scenario of a population of small birds—chortlers—whose population is declining. The students are asked to use various tools to analyze data in a variety of ways and to determine some possible causes for the population decrease so that they can then present their findings on the impacts on the chortlers. The multiple developments of the graphs are extended constructed responses, as is the development of the conclusions to be presented, which in this example are not written on the computer.

Extended responses can provide particularly useful insight into a student's level of conceptual understanding and reasoning. They can also be used to probe a student's capability to analyze a situation and choose and carry out a plan to address that situation, as well as to interpret the student's response. Students may also be given an opportunity to explain their responses, their reasoning processes, or their approaches to the problem situation, and to communicate the outcomes of their approach. Care must be taken, however, particularly with fourth-graders and English language learners, that language capability is not confounded with technology and engineering literacy.